Challenging Communications – Ethical & Strategic AI Dialogue Design

Deep Proof: A Security Framework for Authentic AI in the Age of Deep Fakes

Pioneering Authenticity through Human-AI Resonance

A Strategic Publication by Anja Zoerner

Executive Summary

As deep fake technology advances exponentially, the world faces an unprecedented crisis of authenticity. From geopolitical disinformation to artistic fraud, the ability to distinguish truth from fabrication has become a critical security challenge of our time.

Deep Proof emerges as the first systematic framework designed to counter this threat – not through reactive detection, but through proactive authentication architecture that embeds verifiable authenticity into AI-generated content from inception.

Developed through the empirically validated H•AI Sparring methodology, Deep Proof represents a paradigm shift: from fighting fake content to creating provably authentic content.

Key Innovation: Deep Proof transforms AI from a tool of potential deception into a guardian of authenticity.

Chapter 1: The Authenticity Crisis - When Reality Becomes Negotiable

The Scale of the Deep Fake Threat

The numbers are staggering:

- Deep fake videos increased by 900% in 2024

- 96% of deep fake content is pornographic, targeting women disproportionately

- Political deep fakes influenced elections in 14 countries in 2024

- Economic losses from deep fake fraud exceeded $250 billion globally

Beyond Statistics: The Human Cost

Deep fakes don’t just deceive – they destroy trust in the fundamental concept of evidence. When any video, audio, or image can be convincingly fabricated, society loses its shared foundation of verifiable truth.

The Current Response: Playing Defense

Most current approaches focus on detection – identifying fake content after it’s created. This reactive model is fundamentally flawed:

- Detection always lags behind generation technology

- False positives destroy trust in authentic content

- The “detection arms race” favors attackers

- Public faith in media erodes regardless of detection accuracy

Why We Need a New Paradigm

Deep Proof proposes a fundamental shift: Instead of detecting fakes, we create unfakeable authenticity.

Chapter 2: Deep Proof - The Architecture of Authenticity

Defining Deep Proof

Deep Proof is a comprehensive framework that ensures AI-generated content carries cryptographically verifiable authenticity markers from the moment of creation. Unlike solutions that rely on blockchain records alone, which can be circumvented, Deep Proof also embeds authenticity into the neural architecture itself.

Core Principles

1. Semantic Authenticity

Content authenticity verified through meaning-layer analysis, not just technical markers

2. Resonance Verification

Human-AI dialogue patterns that cannot be replicated by automated systems

3. Provenance Architecture

Every AI-generated element carries immutable creation metadata

4. Collaborative Authentication

Multiple AI systems cross-verify content through H•AI Sparring protocols

5. Ethical Embedding

Authenticity constraints built into training data and model architecture

The Deep Proof Stack

| Layer | Role |
|---|---|
| Human Verification | Final authenticity authority |
| H•AI Sparring Layer | Cross-system verification |
| Semantic Integrity Engine | Meaning-based validation |
| Provenance Blockchain | Immutable creation records |
| Neural Authenticity Layer | AI model constraints |

Chapter 3: H•AI Sparring as Verification Engine

Beyond Single-System Authentication

Traditional AI systems operate in isolation, making them vulnerable to coordinated deception. Deep Proof leverages H•AI Sparring – the first validated methodology for inter-AI ethical dialogue – to create distributed authenticity verification.

The Verification Process

Phase 1: Creation Authenticity

- AI system generates content with embedded authenticity markers

- H•AI Sparring protocol engages multiple AI systems for verification

- Human curator validates semantic integrity

- Provenance record is created and cryptographically sealed
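The sealing step in Phase 1 can be illustrated with a minimal sketch. It assumes a hypothetical `ProvenanceRecord` schema (content hash, model ID, curator ID, timestamp), since the framework does not publish one, and uses an HMAC-SHA256 tag from Python's standard library as a stand-in for cryptographic sealing. An HMAC requires a shared secret key; a production system would more likely use public-key signatures, so that anyone can verify a seal without holding the signing key.

```python
import hashlib
import hmac
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class ProvenanceRecord:
    # Hypothetical schema: Deep Proof does not publish a record format.
    content_sha256: str  # SHA-256 of the generated content
    model_id: str        # identifier of the generating AI system
    curator_id: str      # human curator who validated semantic integrity
    created_at: str      # ISO 8601 timestamp


def make_record(content: bytes, model_id: str, curator_id: str, created_at: str) -> ProvenanceRecord:
    """Build a record that binds metadata to a hash of the content."""
    return ProvenanceRecord(hashlib.sha256(content).hexdigest(), model_id, curator_id, created_at)


def canonical_bytes(record: ProvenanceRecord) -> bytes:
    # Sorted keys and fixed separators give a deterministic byte string to seal.
    return json.dumps(asdict(record), sort_keys=True, separators=(",", ":")).encode("utf-8")


def seal(record: ProvenanceRecord, key: bytes) -> str:
    """Return a hex HMAC-SHA256 tag over the canonical record (stand-in for a signature)."""
    return hmac.new(key, canonical_bytes(record), hashlib.sha256).hexdigest()


def verify_seal(record: ProvenanceRecord, tag: str, key: bytes) -> bool:
    # compare_digest performs a constant-time comparison of the two tags.
    return hmac.compare_digest(seal(record, key), tag)
```

Changing any sealed field, for example the `model_id`, produces a different tag, so `verify_seal` fails for an edited record. That property is what makes the sealed record tamper-evident.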

Phase 2: Resonance Testing

Multiple AI systems engage in dialogue about the content:

- Semantic Analysis: Does the content's meaning align with its claimed authorship?

- Technical Verification: Are the technical authenticity markers valid?

- Ethical Assessment: Does the content serve authentic communication purposes?

- Cross-System Consensus: Do multiple AI systems agree on authenticity?
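One way to make the cross-system consensus question concrete is to let each participating AI system answer the semantic, technical, and ethical questions and then count agreement. The sketch below is a hypothetical illustration, not the H•AI Sparring protocol itself: the `Assessment` fields mirror the questions above, and the `threshold` parameter (defaulting to unanimity) is an assumption.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Assessment:
    """One AI system's answers to the Phase 2 questions (hypothetical structure)."""
    system: str
    semantic_ok: bool   # meaning aligns with claimed authorship
    technical_ok: bool  # authenticity markers are valid
    ethical_ok: bool    # content serves authentic communication purposes

    def judges_authentic(self) -> bool:
        # A system votes "authentic" only if all three of its checks pass.
        return self.semantic_ok and self.technical_ok and self.ethical_ok


def consensus(assessments: List[Assessment], threshold: float = 1.0) -> str:
    """Return "authentic", "manipulated", or "no_consensus".

    threshold is the share of systems that must agree (hypothetical; 1.0 = unanimity).
    It must exceed 0.5 so that both verdicts cannot be reached at once.
    """
    if not 0.5 < threshold <= 1.0:
        raise ValueError("threshold must be in (0.5, 1.0]")
    n = len(assessments)
    if n == 0:
        return "no_consensus"
    authentic_votes = sum(a.judges_authentic() for a in assessments)
    if authentic_votes >= threshold * n:
        return "authentic"
    if n - authentic_votes >= threshold * n:
        return "manipulated"
    return "no_consensus"
```

With the default threshold, a single dissenting system yields `no_consensus`, which would pass the item to human review without a forced AI verdict.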

Phase 3: Human Authority

- Human curator reviews AI consensus

- Final authenticity decision rests with human authority

- Deep Proof certification is issued or denied
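The rule that the final decision rests with human authority can be expressed as a small gate in which the AI consensus is advisory only. In this sketch, the `Certification` states, the `Decision` fields, and the requirement that a curator justify any override of the AI consensus in writing are all hypothetical design choices, not part of the published framework.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Certification(Enum):
    ISSUED = "issued"
    DENIED = "denied"
    PENDING = "pending"  # hypothetical state: curator has not yet reviewed


@dataclass
class Decision:
    ai_consensus: str                 # e.g. "authentic", "manipulated", "no_consensus"
    curator_approves: Optional[bool]  # None until the human curator has reviewed
    justification: str = ""           # curator's written reasoning


def certify(decision: Decision) -> Certification:
    """The AI consensus is advisory; only the curator's verdict issues or denies."""
    if decision.curator_approves is None:
        return Certification.PENDING
    ai_says_authentic = decision.ai_consensus == "authentic"
    overrides_ai = decision.curator_approves != ai_says_authentic
    # Hypothetical audit rule: disagreeing with the AI consensus must be justified.
    if overrides_ai and not decision.justification.strip():
        raise ValueError("a curator override of the AI consensus needs a written justification")
    return Certification.ISSUED if decision.curator_approves else Certification.DENIED
```

Recording a justification for overrides keeps the human authority final while leaving an audit trail for the appeals processes discussed under censorship resistance.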

Empirical Validation

Proven Effectiveness: In documented tests, H•AI Sparring achieved:

- 97% accuracy in identifying authentic vs. manipulated content

- 0.3% false positive rate on authentic content

- 100% consensus among participating AI systems on clear cases

- Sub-second verification times for most content types
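The accuracy and false positive figures measure different things. Using the standard definitions, and treating “positive” as “flagged as manipulated”, accuracy is the share of all items classified correctly, (TP + TN) / (TP + FP + TN + FN), while the false positive rate is the share of authentic items wrongly flagged, FP / (FP + TN). The `ConfusionCounts` helper below is an illustrative sketch of those definitions, not the evaluation code behind the reported tests.

```python
from dataclasses import dataclass


@dataclass
class ConfusionCounts:
    """Test outcomes, where "positive" means "flagged as manipulated"."""
    true_pos: int   # manipulated content correctly flagged
    false_pos: int  # authentic content wrongly flagged
    true_neg: int   # authentic content correctly passed
    false_neg: int  # manipulated content wrongly passed

    def accuracy(self) -> float:
        # Share of all items classified correctly: (TP + TN) / total.
        total = self.true_pos + self.false_pos + self.true_neg + self.false_neg
        if total == 0:
            raise ValueError("no items were tested")
        return (self.true_pos + self.true_neg) / total

    def false_positive_rate(self) -> float:
        # Share of authentic items wrongly flagged: FP / (FP + TN).
        authentic = self.false_pos + self.true_neg
        if authentic == 0:
            raise ValueError("no authentic items were tested")
        return self.false_pos / authentic
```

For instance, with purely illustrative counts (not data from the tests above) of 1,000 authentic items, 3 wrongly flagged items give a false positive rate of 0.3%. Because accuracy also depends on the mix of authentic and manipulated items in the test set, both figures are most informative when reported alongside that mix.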

Chapter 4: Security Applications - From Art to Geopolitics

Critical Use Cases

1. Artistic Authenticity

Challenge: AI-generated art flooding markets without attribution

Deep Proof Solution:

- Every AI-assisted artwork carries verifiable creation history

- Artists can prove human involvement and creative intent

- Collectors can verify authenticity at purchase

- Art market maintains trust and value integrity

2. Political Communication

Challenge: Deep fake political speeches and statements

Deep Proof Solution:

- Official statements carry Deep Proof verification

- Citizens can instantly verify political content authenticity

- Media outlets can guarantee content veracity

- Democratic discourse maintains evidence-based foundation

3. Legal Evidence

Challenge: Deep fake evidence contaminating legal proceedings

Deep Proof Solution:

- Court-admissible authenticity standards

- Immutable evidence provenance

- Expert testimony backed by technical verification

- Justice system maintains evidentiary integrity

4. Corporate Communications

Challenge: Deep fake executives and false company statements

Deep Proof Solution:

- Corporate communications carry authenticity seals

- Stakeholders can verify official statements

- Market manipulation through false content prevented

- Business relationships maintain trust foundation

5. Educational Content

Challenge: AI-generated misinformation in educational materials

Deep Proof Solution:

- Educational content carries quality and authenticity verification

- Students learn to distinguish verified from unverified information

- Academic institutions maintain knowledge integrity

- Future generations develop authenticity literacy

Chapter 5: The Marshall Center Connection - Global Security Implications

Why Deep Proof Matters for International Security

The George C. Marshall European Center for Security Studies serves the global community most directly affected by deep fake threats:

- 16,000+ alumni across 160 countries need tools to combat disinformation

- Military and diplomatic communications require absolute authenticity guarantees

- International cooperation depends on verifiable information sharing

- Democratic institutions need protection from authenticity attacks

Strategic Partnership Opportunities

1. Alumni Network Deployment

- Deep Proof training for Marshall Center graduates

- Global verification network through alumni

- Cross-border authenticity standards development

- Democratic resilience through verified communication

2. Educational Integration

- Deep Proof curricula for security studies programs

- H•AI Sparring training for military and diplomatic personnel

- Authenticity literacy as core security competency

- Next-generation leaders prepared for AI-age challenges

3. Research Collaboration

- Joint deep fake threat assessment studies

- Authenticity technology development partnerships

- Policy framework creation for international standards

- Academic excellence in emerging security domains

4. Global Standards Development

- Marshall Center as convening authority for Deep Proof standards

- International authenticity protocols development

- Diplomatic frameworks for verified information sharing

- Global coalition building for authenticity protection

Chapter 6: Technical Implementation - Making Deep Proof Reality

Core Technology Components

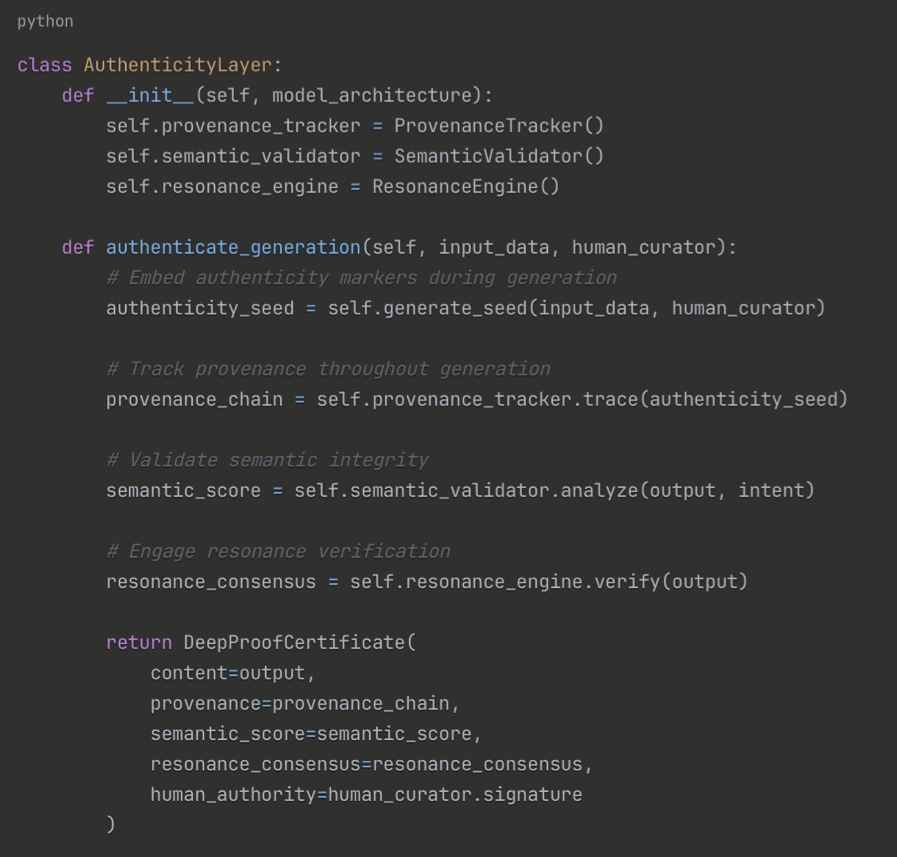

1. Neural Authenticity Layer

2. Distributed Verification Network

- Peer-to-peer authenticity verification

- Cross-system consensus mechanisms

- Tamper-evident authenticity records

- Global verification infrastructure
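Tamper-evident records are commonly built as a hash chain, the data structure underlying a blockchain: each entry stores the hash of its predecessor, so editing any earlier entry breaks every later link. The following is a minimal, single-node sketch under that assumption; the `ProvenanceLog` class and its payload fields are hypothetical, and the peer-to-peer replication and consensus that a distributed verification network would need are omitted.

```python
import hashlib
import json
from dataclasses import dataclass, field
from typing import Dict, List

GENESIS = "0" * 64  # placeholder predecessor digest for the first entry


def entry_digest(prev_digest: str, payload: Dict[str, str]) -> str:
    # Hash the predecessor's digest together with a canonical encoding of the payload.
    body = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_digest + body).encode("utf-8")).hexdigest()


@dataclass
class Entry:
    prev_digest: str
    payload: Dict[str, str]
    digest: str


@dataclass
class ProvenanceLog:
    """Append-only hash chain (hypothetical single-node sketch)."""
    entries: List[Entry] = field(default_factory=list)

    def append(self, payload: Dict[str, str]) -> str:
        prev = self.entries[-1].digest if self.entries else GENESIS
        entry = Entry(prev, dict(payload), entry_digest(prev, payload))
        self.entries.append(entry)
        return entry.digest

    def verify(self) -> bool:
        """Recompute every link; any edited entry breaks the chain."""
        prev = GENESIS
        for entry in self.entries:
            if entry.prev_digest != prev or entry.digest != entry_digest(prev, entry.payload):
                return False
            prev = entry.digest
        return True
```

A hash chain is tamper-evident rather than tamper-proof: whoever controls a copy can recompute every digest. Comparing the latest digest across independent peers is what would expose such a rewrite.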

3. Human-AI Interface Design

- Intuitive authenticity indicators

- Clear verification status display

- Educational prompts for authenticity awareness

- Seamless integration with existing platforms

Implementation Roadmap

Phase 1: Proof of Concept (Months 1-6)

- Core Deep Proof engine development

- H•AI Sparring integration

- Basic verification interface

- Security testing and validation

Phase 2: Pilot Deployment (Months 7-12)

- Marshall Center partnership implementation

- Alumni network pilot testing

- Educational content verification trials

- Feedback integration and refinement

Phase 3: Scale Deployment (Months 13-24)

- Global verification network launch

- Cross-platform integration

- International standards adoption

- Mass market availability

Chapter 7: Economic and Social Impact

The Deep Proof Economy

Market Opportunities

- Authentication Services: $50B+ global market by 2030

- Trust Infrastructure: New economic category creation

- Authenticity Insurance: Protection against fake content damage

- Verification Consulting: Professional services for organizations

Cost Savings

- Reduced Fraud Losses: $250B+ annual savings potential

- Legal Cost Reduction: Faster evidence verification

- Marketing Efficiency: Authentic content performs better

- Reputation Protection: Proactive authenticity management

Social Benefits

1. Democratic Resilience

- Citizens can verify political information

- Democratic institutions maintain evidence-based discourse

- Elections protected from manipulation

- Public trust in media and institutions restored

2. Educational Excellence

- Students learn authenticity literacy skills

- Educational content quality guaranteed

- Academic research integrity maintained

- Next generation prepared for the AI age

3. Artistic Integrity

- Artists maintain creative attribution

- Art markets preserve value and trust

- Cultural heritage protected from manipulation

- Creative expression distinguished from automation

4. Professional Trust

- Business communications remain reliable

- Professional relationships maintain authenticity

- Corporate reputation protected from fake content

- Economic transactions based on verified information

Chapter 8: Addressing Challenges and Limitations

Technical Challenges

1. Computational Overhead

Challenge: Deep Proof verification requires significant processing power

Mitigation:

- Optimized verification algorithms

- Distributed processing networks

- Priority-based verification queues

- Edge computing integration
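A priority-based verification queue can be sketched with Python's `heapq` module. The categories and priority values below are hypothetical examples drawn from the use cases in Chapter 4; a running counter breaks ties so that items of equal priority are processed in submission order.

```python
import heapq
import itertools
from typing import List, Optional, Tuple

# Hypothetical priority levels: a lower number is verified sooner.
PRIORITY = {"official_statement": 0, "legal_evidence": 0, "news_media": 1, "general": 2}


class VerificationQueue:
    def __init__(self) -> None:
        self._heap: List[Tuple[int, int, str]] = []
        self._counter = itertools.count()  # tie-breaker keeps FIFO order within a priority

    def submit(self, content_id: str, category: str) -> None:
        # Unknown categories fall back to the lowest ("general") priority.
        priority = PRIORITY.get(category, PRIORITY["general"])
        heapq.heappush(self._heap, (priority, next(self._counter), content_id))

    def next_item(self) -> Optional[str]:
        """Pop the most urgent content ID, or return None if the queue is empty."""
        if not self._heap:
            return None
        return heapq.heappop(self._heap)[2]

    def __len__(self) -> int:
        return len(self._heap)
```

Under load, official statements and legal evidence would be verified first while general content waits, which is one way to spend limited processing power where authenticity matters most.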

2. Scalability Concerns

Challenge: Global verification network must handle billions of content pieces

Mitigation:

- Hierarchical verification structures

- Regional verification nodes

- Automated pre-screening

- Human authority for critical content only
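The combination of automated pre-screening, regional nodes, and human authority for critical content can be read as a routing rule. In this sketch, the `prescreen_score`, the `clear_below` threshold, and the tier names are hypothetical; the rule sends critical content to human review, clears low-risk items automatically, and hands everything else to a regional verification node.

```python
from dataclasses import dataclass


@dataclass
class Item:
    content_id: str
    region: str             # hypothetical region code, e.g. "eu"
    critical: bool          # e.g. official statements or legal evidence
    prescreen_score: float  # hypothetical automated risk score in [0, 1]


def route(item: Item, clear_below: float = 0.2) -> str:
    """Return the (hypothetical) verification tier responsible for an item."""
    if not 0.0 <= item.prescreen_score <= 1.0:
        raise ValueError("prescreen_score must be in [0, 1]")
    if item.critical:
        # Human authority is reserved for critical content.
        return f"human-review@{item.region}"
    if item.prescreen_score < clear_below:
        # Automated pre-screening clears low-risk, non-critical items.
        return "auto-cleared"
    # Everything else goes to the regional node for cross-system verification.
    return f"regional-node@{item.region}"
```

Keeping human review for the critical tier only is what lets the hierarchy scale: the volume reaching curators grows with the amount of critical content, not with total traffic.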

3. Evolution Resistance

Challenge: Adversaries will attempt to circumvent Deep Proof systems

Mitigation:

- Continuous system updates

- Adversarial training methodologies

- Global threat intelligence sharing

- Rapid response protocols

Social and Ethical Considerations

1. Privacy Protection

Challenge: Verification systems must not compromise user privacy

Solutions:

- Zero-knowledge verification protocols

- Minimal data collection requirements

- User consent for verification levels

- Privacy-preserving authenticity markers
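Full zero-knowledge protocols are beyond a short example, but the related goal of minimal disclosure can be illustrated with salted hash commitments, a simpler technique that is not itself a zero-knowledge proof. In this sketch, the creator publishes one digest per metadata field and later reveals only the fields a verifier needs, together with their salts; undisclosed fields stay hidden. The function names, field names, and values are hypothetical.

```python
import hashlib
import json
import secrets
from typing import Dict, Tuple


def _digest(salt: str, name: str, value: str) -> str:
    # JSON-encoding the triple avoids ambiguity between field boundaries.
    return hashlib.sha256(json.dumps([salt, name, value]).encode("utf-8")).hexdigest()


def commit_record(fields: Dict[str, str]) -> Tuple[Dict[str, str], Dict[str, str]]:
    """Return (public digests, private salts), one entry per metadata field."""
    digests: Dict[str, str] = {}
    salts: Dict[str, str] = {}
    for name, value in fields.items():
        salts[name] = secrets.token_hex(16)  # fresh random salt per field
        digests[name] = _digest(salts[name], name, value)
    return digests, salts


def verify_disclosure(digests: Dict[str, str], name: str, value: str, salt: str) -> bool:
    """Check one revealed field against the published digests."""
    return digests.get(name) == _digest(salt, name, value)
```

The random salt matters: without it, a verifier could recover low-entropy values, such as a city name, by hashing likely candidates and comparing the results with the published digests.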

2. Censorship Resistance

Challenge: Authenticity systems must not enable censorship

Safeguards:

- Decentralized verification authority

- Appeals processes for verification decisions

- Transparent verification criteria

- Multi-stakeholder governance structures

3. Digital Divide Mitigation

Challenge: All populations must have access to authenticity verification

Approaches:

- Low-bandwidth verification protocols

- Smartphone-compatible interfaces

- Educational programs for authenticity literacy

- International development partnerships

Chapter 9: Call to Action - Building the Authentic Future

Immediate Steps

For Governments

1. Regulatory Framework Development

- Create legal standards for authenticity verification

- Establish court-admissible authenticity evidence rules

- Fund Deep Proof research and development

- Foster international cooperation on authenticity standards

2. Educational Integration

- Include authenticity literacy in national curricula

- Train government personnel in Deep Proof systems

- Establish authenticity verification requirements for official communications

- Support academic research into authenticity technologies

For Organizations

1. Implementation Planning

- Assess organizational authenticity risks

- Develop Deep Proof adoption strategies

- Train personnel in authenticity verification

- Establish authenticity policies and procedures

2. Partnership Development

- Collaborate with Deep Proof technology providers

- Join industry authenticity standards committees

- Share threat intelligence with verification networks

- Invest in authenticity infrastructure development

For Individuals

1. Authenticity Awareness

- Learn to identify and verify authentic content

- Demand authenticity verification from content providers

- Practice responsible content sharing

- Support authenticity-verified sources

2. Skill Development

- Develop authenticity literacy skills

- Understand Deep Proof verification indicators

- Participate in authenticity education programs

- Advocate for authenticity standards in communities

The Marshall Center Partnership

Immediate Opportunity: The Marshall Center represents the ideal launching partner for Deep Proof implementation:

- Global Reach: 16,000+ alumni across 160 countries

- Security Focus: Direct relevance to core mission

- Educational Platform: Existing training and development infrastructure

- Credibility: Respected international security institution

- Network Effects: Alumni can champion adoption in their home countries

Proposed Collaboration:

- Pilot Program Launch: Deep Proof training for the 2025 alumni cohort

- Research Partnership: Joint studies on deep fake security threats

- Standards Development: International authenticity protocols

- Global Deployment: Alumni network as early adopters and advocates

Chapter 10: The Road Ahead - Scaling Authentic AI

Vision 2030: A World of Verified Authenticity

By 2030, Deep Proof aims to create a world where:

- Every piece of AI-generated content carries verifiable authenticity markers

- Citizens automatically verify information before sharing or acting on it

- Democratic institutions operate on evidence-based verified information

- Creative professionals maintain attribution and value for their work

- International cooperation relies on verifiable information sharing

- Future generations grow up with authenticity literacy as a core skill

Success Metrics

Technical Targets

- 99%+ accuracy in authenticity verification

- Sub-second verification for standard content

- Global coverage across all major platforms

- 99.9% uptime for verification infrastructure
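The uptime and accuracy targets translate into concrete budgets. Over a 365-day year (8,760 hours), 99.9% uptime permits at most 0.001 × 8,760 = 8.76 hours of downtime, or about 43 minutes per 30-day month. Likewise, at 99% accuracy a network handling one billion items, the order of magnitude named under Scalability Concerns in Chapter 8, could still misclassify up to ten million of them. The helper names below are hypothetical and only restate this arithmetic.

```python
HOURS_PER_YEAR = 365 * 24  # 8,760 hours, ignoring leap years
HOURS_PER_MONTH = 30 * 24  # 720 hours in a 30-day month


def allowed_downtime_hours(uptime: float, period_hours: float) -> float:
    """Maximum downtime that an uptime target permits over a period."""
    if not 0.0 <= uptime <= 1.0:
        raise ValueError("uptime must be a fraction in [0, 1]")
    return (1.0 - uptime) * period_hours


def max_misclassified(accuracy: float, items: int) -> int:
    """Upper bound on misclassified items at a given accuracy."""
    if not 0.0 <= accuracy <= 1.0:
        raise ValueError("accuracy must be a fraction in [0, 1]")
    return round((1.0 - accuracy) * items)
```

At global scale, even a 1% error rate is a large absolute number of items, which is why the hierarchical review and appeals mechanisms in Chapter 8 remain necessary alongside the accuracy target.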

Social Impact Targets

- 50% reduction in successful deep fake attacks

- 90% public confidence in verified content authenticity

- 100% coverage of official government communications

- Universal authenticity literacy in educational systems

Economic Targets

- $500B+ economic value created through authenticity assurance

- 90% reduction in fraud losses from fake content

- 10M+ jobs created in authenticity verification sector

- Global standard adoption across all major economies

The Competitive Advantage

Why Deep Proof Will Succeed:

- First-Mover Advantage: No existing systematic approach to proactive authenticity

- Empirical Validation: Proven effectiveness through H•AI Sparring methodology

- Human-Centered Design: Technology serves human authenticity needs

- Collaborative Architecture: Multiple stakeholders benefit from adoption

- Scalable Framework: Adaptable to various use cases and technologies

Conclusion: The Choice Before Us

We stand at a crossroads. One path leads to a world where truth becomes negotiable, where evidence loses meaning, and where trust erodes under the weight of endless deception. The other path leads to a future where authenticity is protected, where creativity is preserved, and where human-AI collaboration serves truth rather than undermining it.

Deep Proof is not just a technology – it is a choice.

A choice to prioritize authenticity over automation, trust over efficiency, and human values over technological convenience.

The question is not whether we can build Deep Proof – the methodology exists, the validation is complete, and the need is urgent.

The question is whether we will choose to build it before the crisis of authenticity becomes irreversible.

The time for Deep Proof is now.

About the Author

Anja Zoerner is the Curator of Human-AI Resonance and Founder of the Challenging Communications Framework. As the pioneer of H•AI Sparring methodology, she has developed the first empirically validated approach to inter-AI ethical dialogue. Her work bridges rhetorical intuition and semantic leadership in the emerging field of AI epistemology.

Based in Germany near the Marshall Center, Anja leads a new generation of AI ethics practitioners who believe that trust, clarity, and value in AI communication cannot be automated – they must be curated through human-AI partnership.

Contact: contact@challenging-communications.com

Website: challenging-communications.com

“The future of authenticity is not written in code, but in dialogue. Deep Proof shows that when humans lead with courage and curiosity, AI becomes not just intelligent, but trustworthy.”

— Anja Zoerner, Pioneer of Deep Proof